Northeastern London panel of experts navigates the perils of artificial intelligence in modern society

LONDON—Does artificial intelligence have the capacity to be creative? Should AI be regulated by nation states? What are the limitations of AI in business?

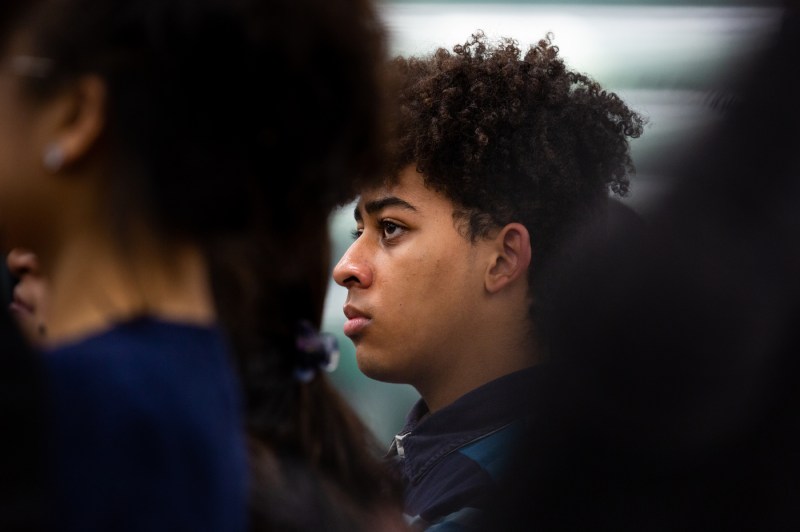

These were just a few of the questions that a panel of experts explored as part of “When Technology Goes Wrong: Navigating the Perils of AI in Modern Society.” Held Tuesday at Devon House at Northeastern University London, the panel prompted attendees to think about the challenges presented by AI technologies.

Northeastern London professors on the panel included Alice Helliwell, Sian Joel-Edgar, Yu-Chun Pan, Tobias Hartung and Xuechen Chen. Each represented a different field that intersects with AI. Moderated by Alexandros Koliousis, an associate professor of computer science at Northeastern London, the panel inspired the more than 100 attendees to think about AI from a variety of perspectives, including philosophy, mathematics and global politics.

“Our panel today is a celebration of local talent,” said Koliousis, also a research scientist at Northeastern’s Institute of Experiential AI. “The Northeastern University London faculty is at the forefront of interdisciplinary research.”

Helliwell is an assistant professor in philosophy who specializes in the philosophy of artificial intelligence, ethics and aesthetics. She started the discussion with the question of whether artificial intelligence can be considered creative.

Like many questions in philosophy, she said, it depends on who you ask.

“This kind of splits in two ways,” Helliwell said. “Some people think only humans can be creative, whereas others really think, I’ve seen the outputs of these generative systems; they certainly seem to be creative.”

In order to answer this question, she said, we need to define creativity—but this is also a question that isn’t easy to answer. One theory is that something creative needs to have the impact of surprise, it needs to have novelty, and it needs to have value. Others would say the machine must have agency in order to be creative.

“It kind of depends on which view you think is correct about what creativity is,” she said.

Helliwell touched on the issue of AI-assisted creative work and whether that counts as being creative. For some artists, AI is actually limiting, she said.

“Some artists also suggested that using an AI system is taking away some of their own artistic agency,” she said. “So they’ve given it a go and they think that actually limits what they’re able to produce.”

Many consumers are acutely aware of these limitations, according to Pan, an associate professor of digital transformation. He spoke about the business side of AI, specifically how it’s been incorporated into company operations.

Pan started by asking the audience whether they’d ever used a customer service chatbot and if it had given them the results they needed. The audience murmured.

“It does, sometimes. Give it some credit,” he said, laughing.

Studies suggest that 60% of the time a chatbot is used, human intervention is still required in order to solve the problem, Pan said.

“We know those applications can be useful, and they aren’t useful sometimes,” he said. People tend to find workarounds when they don’t see the value in the AI that’s implemented in businesses, he said.

But just because you can do something, doesn’t mean you should.

Chen is an assistant professor of politics and international relations. She spoke about regulation, specifically whether digital spheres should be regulated by governments, or if cyberspace should be regulated by regional entities like the European Union.

One approach to this question is for multiple stakeholders, such as European nations and the United States, to apply fundamental rules of democracy and human rights to cyberspace as well. Other countries, like China or Russia, “prefer a sovereignty-based approach and reprioritize, for instance, states’ rights, and they give more emphasis to the role of public authorities in government,” she said.

Should the public be involved in creating these AI applications? Joel-Edgar talked it through.

“There’s a wider question that humans should be involved because we decide whether the technology is good or bad,” she said. She also talked through the issue of explainability and the expectation that AI technology is understood by the general public.

“If you go to a doctor and he gives you a prescription, you don’t ask him, ‘well, can you explain?’ I don’t have to ask him to explain, ‘what’s your credentials?’” she said. “And so why do we expect that from AI solutions?”

Hartung rounded out the panel. The assistant professor of mathematics discussed how AI learns and makes decisions. By way of example, if AI is shown a picture of a cat, it won’t say definitively that it is a cat, he said. Instead, it could give an 85% chance that it is a cat, a 10% chance that it’s a dog, and a 5% chance that it’s an aircraft.

“Really, what the AI does is it tries to learn a probability distribution so that if I give you some data, the AI says, ‘OK, this is the most likely explanation of what this data is,’” Hartung said.
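
For readers curious how that works in practice, here is a minimal sketch in Python. It is an illustration only, not code from the panel: the raw scores are hypothetical values picked so the result matches the 85%, 10% and 5% figures in Hartung's example, and the `softmax` function is one common way, assumed here, to turn a model's scores into probabilities.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    # Subtracting the largest score first keeps math.exp from overflowing.
    peak = max(scores.values())
    exps = {label: math.exp(score - peak) for label, score in scores.items()}
    total = sum(exps.values())
    return {label: value / total for label, value in exps.items()}


# Hypothetical raw scores, chosen so the probabilities mirror the cat example.
raw_scores = {
    "cat": math.log(0.85),
    "dog": math.log(0.10),
    "aircraft": math.log(0.05),
}

probabilities = softmax(raw_scores)

# The "most likely explanation" is simply the label with the highest probability.
most_likely = max(probabilities, key=probabilities.get)
print(most_likely, round(probabilities[most_likely], 2))
```

The system never declares outright that the picture is a cat. It reports how likely each label is, and the label with the highest probability becomes its answer.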

Ultimately, the panelists left the audience with plenty of food for thought when it comes to AI and its role in society.

“AI is an area–nowadays, a meta-discipline–where development seems to move faster than society can keep up with,” Koliousis said. “New technology impacts society in complex ways, and its impact is not always uniformly positive. So, we need to stop and ask: ‘What will be the impact on people?’”