Babies respond to sign language. What does that tell us about human nature?

Take a minute to contemplate a sentence: Individually, each letter of each word doesn’t hold much significance. But put them together into words, organize those words into sentences, and then these symbols convey meaning.

You may never have seen the specific string of words in a given sentence before, but because you understand the abstract rules of language, your mind is able to figure out what the sentence is communicating. However, if the letters are shuffled around into gibberish that does not adhere to those rules, you may not glean any sort of meaning from them.

The capacity for language is thought to be a key attribute that sets humans apart from other species on our planet. And humans seem to be pretty good at learning language from an early age. But scientists have debated why infants are so adept at that kind of learning.

That question has long been confounding because babies could be learning language by hearing their parents and others talk even before being born—and before scientists can study their behavior and comprehension. Are infants’ brains specially tuned to language, or is it simply speech that attracts their attention?

To get around that issue, Iris Berent, professor of psychology at Northeastern, took speech out of the equation and studied a different form of language. In a new study, she looked at whether infants identified the rules of language in sign language.

Northeastern Professor of Psychology Iris Berent. Photo by Matthew Modoono/Northeastern University

Berent concluded that humans are in fact born primed for language. She found that infants—having never learned or witnessed sign languages before the study—can learn rules from sign language just as readily as they can from speech, and that their response is distinct from how they react to other kinds of visual patterns. The results were published in a paper last week in Scientific Reports.

“Human brains are language-ready,” Berent says. “They’re not just speech-ready, they’re language-ready. They’re ready to learn language in all its different and diverse manifestations, whether it’s speech or sign. And it’s not just an artifact of living in utero for nine months. There is something apparently and possibly inherent about language that makes it particularly amenable to learning for human brains.”

In previous research, Berent found that infants so young they were still in the maternity ward can learn the rules of language from speech spontaneously. In that study, Berent and colleagues presented the newborns with patterns of vocalizations. Some of the strings of sounds contained two of the same sounds in repetition, such as “gah, gah, bah” (an AAB pattern). Others did not have any repetition, such as “gah, bah.” The researchers studied the babies’ brain activity and found that their neural response differed notably when the vocalizations were in a pattern that included repetition (AA or BB). This, Berent says, suggests that the babies were responding to what they perceived as a linguistic rule.

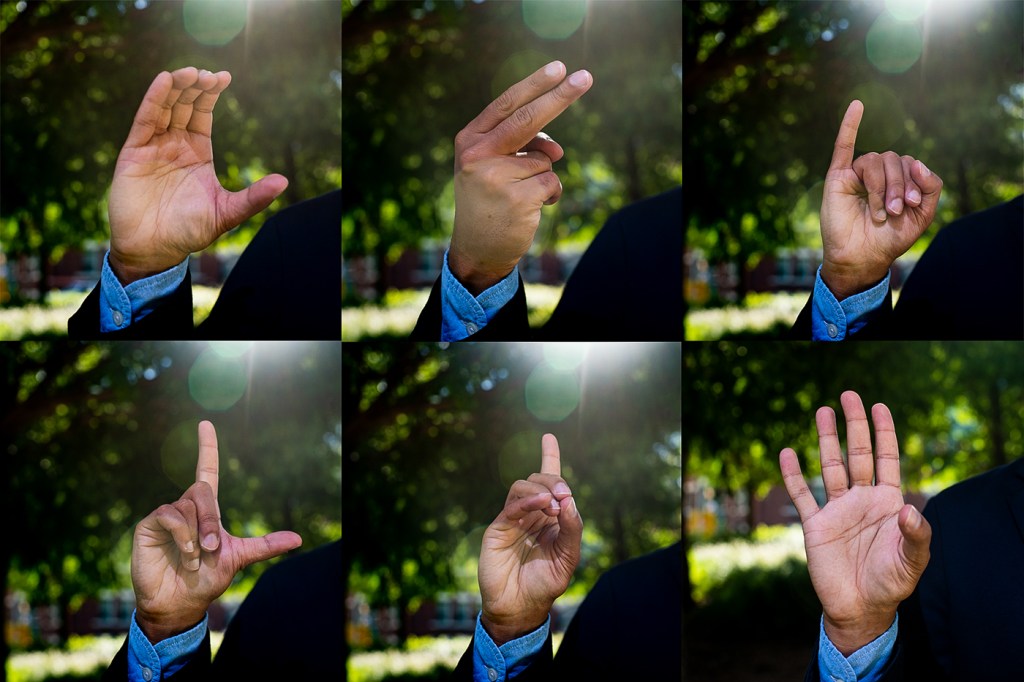

Berent applied the same procedure to her latest study, replacing vocalizations with sign language. The study, conducted at the Université de Paris and the French National Centre for Scientific Research by Berent’s longtime collaborator, Judit Gervain of the University of Padua, looked at the brain activity of 6-month-olds as they were presented with linguistic signs in two different kinds of sequences: one with two identical syllables (AA), and one with non-identical syllables (AB).

As in the vocalization study, the signs in the repetitive pattern (AA) elicited a greater response in the infants’ brains than the AB sequences did. That activity also occurred in the same area of the brain.

“The pattern in speech and sign was indistinguishable,” Berent says. “So the brain responded to the rules equally and exactly in the same way for speech and sign.”

The researchers wanted to be sure that they weren’t just measuring the babies’ ability to identify visual patterns more generally, but that there was something special about language. So they also presented the babies with visual analogs of signs.

To do that, the scientists made a graphic animation of a tree with leaves shaped like human hands. They then mapped the motions of the same sign language syllables used in the first part of the experiment onto those leaves. The idea was to match the dynamic properties of the signs but remove the human communication aspect, reducing them to purely visual patterns.

The infants’ brain activity was reversed in response to the analogs. The AB sequences elicited a greater neural response than the AA sequences.

“The brain responds differently for signs and for really closely matched controls, which shows that it is not the visual things about sign,” Berent says. “It’s really the linguistic thing that kind of gets the brain going there.”

Berent says that while her team’s results weigh in on the nature versus nurture debate around language, “what we’re saying here is a more nuanced claim.”

“We think about language either as something we’re born with or we’re learning,” she says. “What we’re saying is this language instinct is the ability to learn. It’s a very special type of learning mechanism. It’s really kind of narrowly tuned to language.”

Think about a can opener, she says. You’re not born with the can you want to open, but you’re born with that tool. In this case, Berent says, “You’re born with the tool to do something very specific. And for us, it’s learning language.”
