Facebook has already decided how you’re going to vote

Facebook is wielding significant power over political discourse in the United States, thanks to an ad delivery system that reinforces political polarization among users, according to new research from a team of computer scientists.

The study, published this week by researchers from Northeastern University, the University of Southern California, and the nonprofit technology organization Upturn, shows for the first time that Facebook delivers political ads to its users based on the content of those ads and the information the media company has on its users—and not necessarily based on the audience intended by the advertiser.

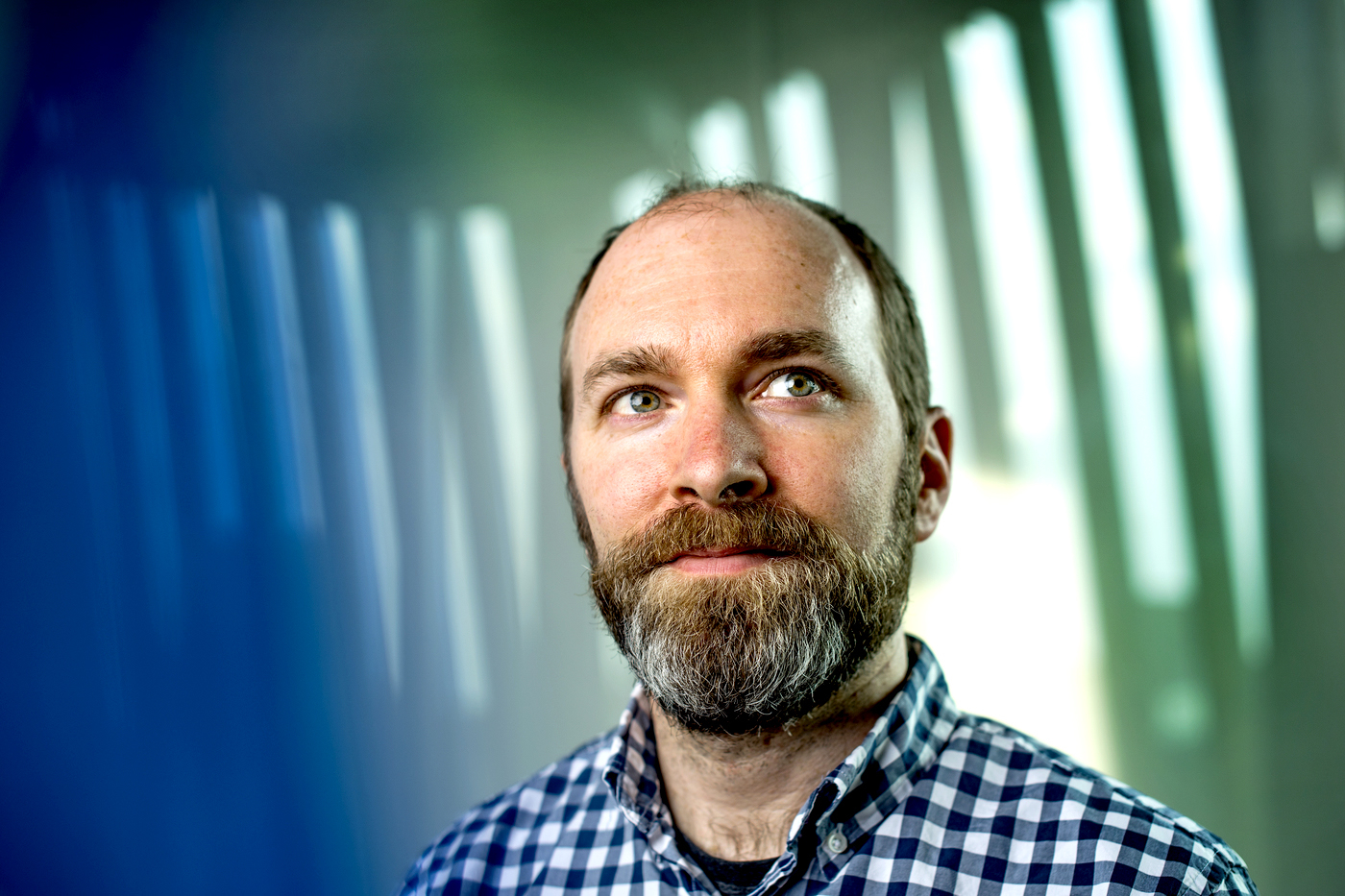

“We found that Facebook will disproportionately deliver an ad to the users who [Facebook] believes agree with the ad, based only on the content,” says Alan Mislove, a professor of computer science at Northeastern and one of the authors of the paper.

Mislove says the findings have grave consequences for democracy in the U.S. Facebook is one of the world’s largest advertising platforms, and its ad delivery system is creating information filter bubbles for its users, the research shows. It reveals that citizens are being served ads that reinforce their existing political beliefs, and being excluded from seeing ads that challenge those beliefs.

In a statement to The Washington Post, a spokesman for Facebook disputed the gravity of the findings.

“Findings showing that ads about a presidential candidate are being delivered to people in their political party should not come as a surprise,” Facebook spokesman Joe Osborne told the Post. “Ads should be relevant to the people who see them. It’s always the case that campaigns can reach the audiences they want with the right targeting, objective, and spend.”

But Mislove says this is an oversimplification.

“I don’t think most people understand the level of optimization that’s taking place in online advertising,” he says. “When Facebook is optimizing ads for relevance, they’re also optimizing for Facebook’s profit margin.”

Facebook, like many of the biggest digital companies, keeps its algorithms under lock and key. So, in order to understand how advertisements are delivered to users, Mislove and his colleagues—a team that also included Northeastern doctoral candidates Muhammad Ali and Piotr Sapiezynski—posed as political advertisers.

The researchers spent more than $13,000 on a set of advertising campaigns that they used to test how Facebook promotes political messaging.

They focused on creating ad campaigns for U.S. Sen. Bernie Sanders, a Democrat, and President Donald J. Trump, a Republican. At the time of the experiment (early July 2019), the real Sanders and Trump camps had spent the most money on Facebook advertising among major candidates from either party, and therefore the researchers felt comfortable that their relatively small advertising budget wouldn’t influence either Sanders’ or Trump’s election performance.

The researchers largely repurposed real ads by both campaigns to test Facebook’s ad delivery system, but with careful attention to the targeted audience. They created specific audiences with public records in North Carolina and Facebook’s own demographic information to sort people by political party affiliation.

Facebook and other online advertising platforms give advertisers a variety of tools to target precise audiences—a practice called “microtargeting” that’s being reconsidered by some of the biggest media companies, including Twitter and Google. (The researchers note that Facebook is also considering changes to its policy.)

With microtargeting, advertisers can zero in on particular demographics in an attempt to get their ad in front of exactly who they want to see it. They can also choose among different objectives, such as displaying the ad to the largest number of users, which was what the researchers chose for their ads.

However, one of the issues the researchers uncovered in their study is that such targeting options have a relatively limited effect on who actually sees an ad, compared to Facebook’s in-house system for determining the “relevance” of an ad, Mislove says.

This system, which is a proprietary algorithm that Facebook keeps secret, is how Facebook determines who sees a certain ad and who doesn’t, Mislove says. And, in an attempt to optimize the success of the ad, Facebook’s algorithm is delivering it to people it believes are predisposed to like it.

Such optimization isn’t unique to political ads, Mislove says; it’s likely the same system Facebook uses to determine the relevance of every advertisement on its site.

Mislove says it might not be a problem for marketing ads or political ads that are intended to raise money by appealing to a campaign’s base, but it is a problem for an ad that’s intended to change the mind of a voter who’s not already on board with the message.

In one case, the researchers found that when they targeted an audience of users defined by Facebook to have “likely engagement with US political content (Liberal)” and an equal audience of people who have “likely engagement with US political content (Conservative),” 60 percent of the liberal users saw the researchers’ Democratic ads, and only 25 percent saw the Republican ads.

In another ad run, researchers pushed out Sanders and Trump ads at the same time to a conservative audience. All else being equal, the Trump ad was delivered to 21,792 conservative Facebook users, and the Sanders ad to 17,964 conservative users, or about 18 percent fewer people.
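
For readers who want to check the arithmetic behind that gap, here is a minimal sketch. The two reach figures come from the article; the function name `percent_fewer` and the variable names are illustrative assumptions, not anything used by the researchers.

```python
def percent_fewer(baseline: int, other: int) -> float:
    """Return how much smaller `other` is than `baseline`, as a percentage of `baseline`."""
    return (baseline - other) / baseline * 100


# Reach figures reported in the article for the conservative audience.
trump_reach = 21_792    # users who were shown the Trump ad
sanders_reach = 17_964  # users who were shown the Sanders ad

# Hypothetical helper applied to the reported numbers.
gap = percent_fewer(trump_reach, sanders_reach)
print(f"The Sanders ad reached {gap:.1f} percent fewer conservative users.")
```

The result is measured relative to the Trump ad's reach, which is the natural baseline when asking how many fewer people saw the Sanders ad.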

The researchers also found that if a political advertiser wanted to overcome this ideological divide, the advertiser had to pay more for the ad. In the most extreme cases, this meant paying as much as two or three times more for an ad, Mislove says.

When the researchers sent out a neutral ad that encouraged people to register to vote, it reached a much more balanced proportion of liberal and conservative Facebook users, despite all other constraints being the same.

For Mislove, the results illustrate a broader problem in society today—the sheer amount of influence that unseen and unregulated algorithms have on everything we do.

“Whether you’re browsing Facebook or using Google Maps, there’s an algorithm that’s optimizing everything you see online,” he says. “And there’s very little accountability, and very little transparency, about how these algorithms determine what that optimization looks like. What I’m thinking about is how we can measure these things, and how we can audit them.”
